How Do Providers Work on Akash Network? Understanding the Decentralized GPU Provider System

Akash Network Providers supply the resources behind Akash's decentralized cloud computing network: they rent out GPUs, CPUs, and other server resources to developers. As demand for AI model training and inference grows rapidly, the global GPU market has faced shortages and rising prices, drawing more idle computing power into decentralized markets where it can be put to use.

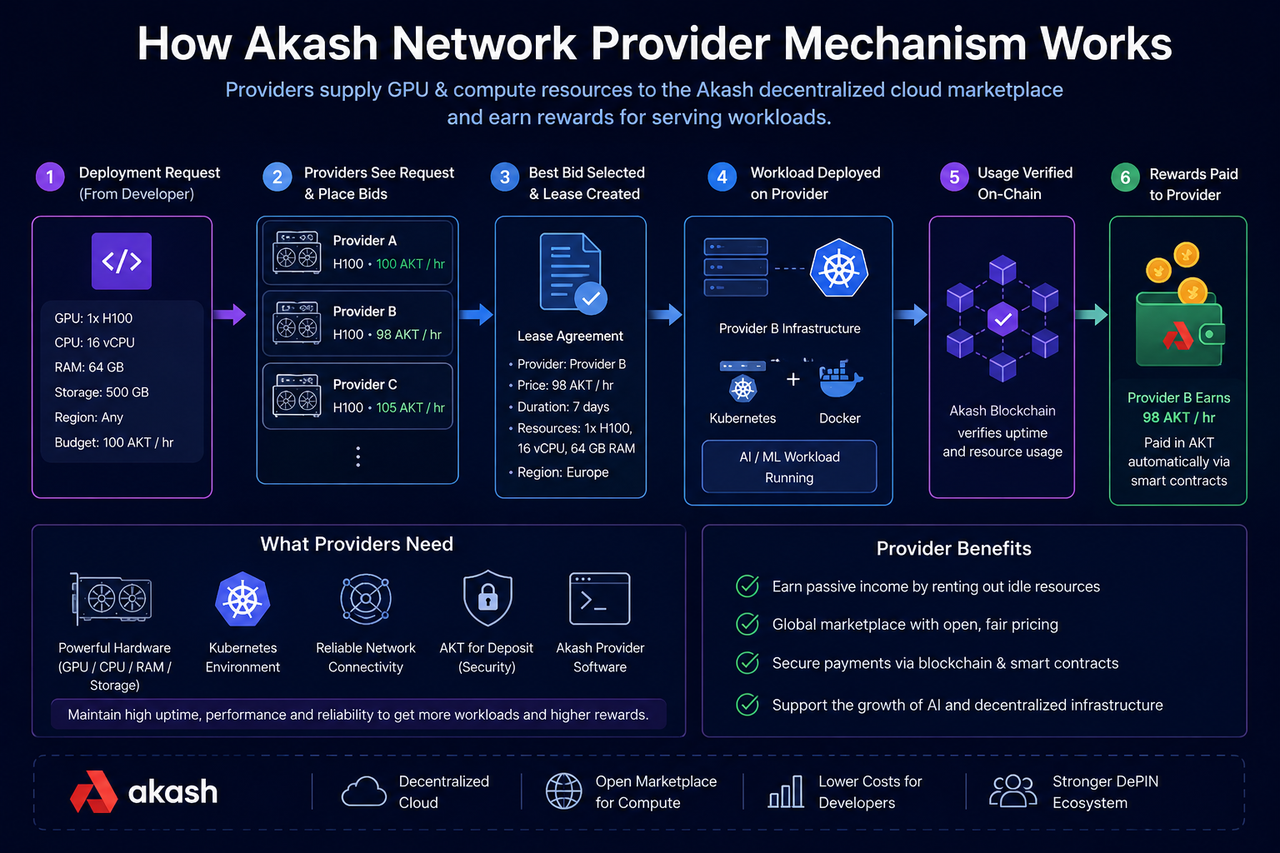

Against this backdrop, the Akash Provider mechanism has become an important part of Web3 AI infrastructure. Unlike traditional cloud platforms, where a single company operates the data centers, Akash allows individuals, mining farms, server rooms, and cloud service operators to participate directly in the GPU market. Through open bidding and on-chain leases, Providers can turn idle GPUs into tradable computing resources and earn revenue from them.

What Is an Akash Provider?

As node operators supplying computing resources to Akash Network, Providers can offer GPUs, CPUs, memory, storage, and network bandwidth. These resources are typically used for AI model deployment, machine learning training, inference services, Web3 node operation, and high-performance computing tasks.

Unlike traditional cloud platforms that manage servers centrally, Akash Providers can come from different regions around the world. In theory, any individual or organization with eligible hardware resources can join the network and provide computing power.

What Is the Core Role of an Akash Provider?

Providers are the foundation on which the Akash decentralized cloud marketplace operates. After a developer submits a Deployment request, Providers bid on it according to its requirements, and the Provider whose bid is accepted actually runs the workload.

Throughout this process, Providers take on several core responsibilities:

Providing Computing Resources

Providers supply GPU, CPU, and server resources used to run AI models and containerized applications.

Participating in Market Bidding

Providers need to submit bids based on resource configuration, GPU type, and market demand.

Running and Maintaining Nodes

After a lease is created, Providers need to keep servers running reliably and ensure that applications are deployed properly.

Earning Leasing Revenue

The GPU usage fees paid by developers are distributed to Providers according to the lease rules.

How Do Providers Join Akash Network?

Becoming an Akash Network Provider usually requires certain hardware and technical capabilities.

First, Providers need to deploy a Kubernetes environment, because Akash uses Kubernetes to manage containerized applications.

Second, Providers need to install the Akash Provider software and configure GPU drivers, network access, TLS certificates, wallet addresses, and resource settings. Once initialization is complete, Providers can publish their server resources to the network and begin receiving Deployment requests from developers.

Some Providers also offer high-performance GPUs such as the NVIDIA A100 and H100 to compete in the AI inference and training market.
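To make "publishing server resources" more concrete, the sketch below sums what a small cluster could offer after holding back some CPU and memory on each node for the operating system and Kubernetes itself. It is a toy model, not how the Akash Provider software computes its inventory (that software reads capacity from the Kubernetes cluster); the `NodeCapacity` class, the `advertisable_inventory` function, and the reserve sizes are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class NodeCapacity:
    """Hypothetical per-node hardware record (not an Akash or Kubernetes type)."""
    name: str
    cpu_millicores: int
    memory_gib: int
    gpus: int
    gpu_model: str


def advertisable_inventory(nodes, reserved_cpu_millicores=1000, reserved_memory_gib=4):
    """Total capacity a Provider could offer after reserving an assumed
    amount of CPU and memory on every node for system components."""
    inventory = {"cpu_millicores": 0, "memory_gib": 0, "gpus": {}}
    for node in nodes:
        inventory["cpu_millicores"] += max(node.cpu_millicores - reserved_cpu_millicores, 0)
        inventory["memory_gib"] += max(node.memory_gib - reserved_memory_gib, 0)
        if node.gpus:
            # Count GPUs per model, since developers request GPUs by model.
            inventory["gpus"][node.gpu_model] = inventory["gpus"].get(node.gpu_model, 0) + node.gpus
    return inventory


nodes = [
    NodeCapacity("gpu-node", cpu_millicores=32000, memory_gib=256, gpus=8, gpu_model="a100"),
    NodeCapacity("cpu-node", cpu_millicores=16000, memory_gib=64, gpus=0, gpu_model=""),
]
print(advertisable_inventory(nodes))
# {'cpu_millicores': 46000, 'memory_gib': 312, 'gpus': {'a100': 8}}
```

Reserving capacity per node keeps a Provider from advertising resources that system components already use, which would otherwise lead to deployments that cannot actually be scheduled.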

How Does the Provider Bidding Mechanism Work?

Akash uses an open market bidding mechanism to allocate computing resources.

When a developer submits a Deployment, its resource requirements are recorded on chain as an order that every Provider on the network can see. Eligible Providers can then submit bids based on their available resources.

A bid usually includes:

- GPU model
- Number of GPUs
- CPU and memory specifications
- Resource price
- Node region
- Service availability

Developers can choose a suitable Provider from multiple bids, after which the system creates a Lease.

Because multiple Providers may compete for the same order, market prices usually change dynamically according to GPU supply and demand.
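The bidding flow can be illustrated with a small Python model: a bid is eligible only if it covers every requirement in the order at or below the developer's maximum price, and the developer then picks among the eligible bids. The `Order` and `Bid` classes, their fields, and the "cheapest bid above an uptime floor" rule are illustrative assumptions; in practice the developer decides which bid to accept using whatever criteria they prefer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Order:
    """Hypothetical resource request derived from a developer's Deployment."""
    gpu_model: str
    gpu_count: int
    cpu_millicores: int
    memory_gib: int
    max_price_per_block: int  # highest price the developer will pay, in uakt


@dataclass(frozen=True)
class Bid:
    """Hypothetical bid carrying the fields listed above."""
    provider: str
    gpu_model: str
    gpu_count: int
    cpu_millicores: int
    memory_gib: int
    price_per_block: int  # asking price, in uakt
    region: str
    uptime: float  # observed availability, 0.0 to 1.0


def is_eligible(bid: Bid, order: Order) -> bool:
    """A bid qualifies only if it meets every requirement within the price cap."""
    return (
        bid.gpu_model == order.gpu_model
        and bid.gpu_count >= order.gpu_count
        and bid.cpu_millicores >= order.cpu_millicores
        and bid.memory_gib >= order.memory_gib
        and bid.price_per_block <= order.max_price_per_block
    )


def choose_bid(bids: list[Bid], order: Order, min_uptime: float = 0.95) -> Optional[Bid]:
    """Illustrative rule: cheapest eligible bid above an uptime floor,
    with ties broken in favor of higher uptime."""
    candidates = [b for b in bids if is_eligible(b, order) and b.uptime >= min_uptime]
    if not candidates:
        return None
    return min(candidates, key=lambda b: (b.price_per_block, -b.uptime))


order = Order("h100", 1, 8000, 64, max_price_per_block=500)
bids = [
    Bid("provider-1", "h100", 1, 8000, 64, 450, "us-west", 0.99),
    Bid("provider-2", "h100", 2, 16000, 128, 420, "eu-central", 0.97),
    Bid("provider-3", "a100", 1, 8000, 64, 300, "us-east", 0.99),
    Bid("provider-4", "h100", 1, 8000, 64, 380, "ap-south", 0.90),
]
print(choose_bid(bids, order).provider)  # provider-2
```

Here `provider-3` offers the wrong GPU model and `provider-4` is cheapest but falls below the uptime floor, so the 420-per-block bid from `provider-2` wins. This is the competitive pressure that keeps prices moving with supply and demand.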

What Happens After a Lease Is Created?

A Lease is an on-chain resource leasing agreement in Akash Network.

When a developer accepts a Provider’s bid, a Lease is created on chain that records the two parties, the agreed price, and the lease status, linked to the resource configuration of the original Deployment. The developer then sends the deployment manifest to the Provider, which pulls the specified container images and deploys the corresponding workload on its servers.

The entire process usually relies on Kubernetes and Docker container technology, allowing developers to run AI services much like they would on a traditional cloud platform.
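The information a Lease ties together can be sketched as a small data model. The identifier fields below loosely mirror Akash's on-chain lease ID (tenant address, deployment, group and order sequence numbers, and Provider address), but the classes, the two-state lifecycle, and the placeholder addresses are simplifications for illustration, not the actual Akash types.

```python
from dataclasses import dataclass
from enum import Enum


class LeaseState(Enum):
    """Simplified lease states for this sketch."""
    ACTIVE = "active"
    CLOSED = "closed"


@dataclass(frozen=True)
class LeaseID:
    """Identifies one lease; fields loosely mirror Akash's lease ID."""
    owner: str     # developer (tenant) address
    dseq: int      # deployment sequence number
    gseq: int      # resource group within the deployment
    oseq: int      # order within the group
    provider: str  # address of the Provider whose bid was accepted


@dataclass
class Lease:
    lease_id: LeaseID
    price_per_block_uakt: int  # price taken from the accepted bid
    state: LeaseState = LeaseState.ACTIVE

    def close(self) -> None:
        """Stop billing; the Provider is then expected to remove the workload."""
        self.state = LeaseState.CLOSED


# Placeholder addresses, not real accounts.
lease = Lease(LeaseID("akash1tenantexample", 42, 1, 1, "akash1providerexample"), 1000)
print(lease.state.value)  # active
lease.close()
print(lease.state.value)  # closed
```

Making `LeaseID` frozen reflects that a lease's identity never changes once created, while its state does.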

How Do Providers Earn Revenue?

The main source of Provider revenue is leasing fees from GPU and server resources.

Developers pay for as long as they use the resources: fees are drawn from a deposit held in escrow for the deployment at the price set in the lease, and Providers collect that revenue while the lease remains active.
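Akash prices leases per block, so revenue accrues block by block for as long as the lease stays open and the escrow deposit lasts. The arithmetic below assumes a 6-second block time and a price quoted in uakt (1 AKT = 1,000,000 uakt); the helper functions are hypothetical, real block times vary, and any protocol fees deducted from lease payments are ignored.

```python
UAKT_PER_AKT = 1_000_000        # 1 AKT = 1,000,000 uakt
ASSUMED_BLOCK_TIME_SECONDS = 6  # assumption: real block times vary slightly


def blocks_in(seconds: int) -> int:
    """Approximate number of blocks produced in a time window."""
    return seconds // ASSUMED_BLOCK_TIME_SECONDS


def accrued_uakt(price_per_block_uakt: int, blocks: int) -> int:
    """Fees moved from the developer's escrow to the Provider over `blocks` blocks."""
    return price_per_block_uakt * blocks


def escrow_runway_blocks(escrow_balance_uakt: int, price_per_block_uakt: int) -> int:
    """Whole blocks the remaining deposit can pay for before it runs out."""
    return escrow_balance_uakt // price_per_block_uakt


month_blocks = blocks_in(30 * 24 * 3600)                 # 432000 blocks
print(accrued_uakt(1000, month_blocks) / UAKT_PER_AKT)   # 432.0 (AKT)
print(escrow_runway_blocks(5 * UAKT_PER_AKT, 1000))      # 5000 (blocks)
```

At 1,000 uakt per block, a 30-day lease accrues about 432 AKT, while a 5 AKT deposit covers only 5,000 blocks (roughly 8 hours at the assumed block time). The lease can keep paying the Provider only if the developer keeps the escrow topped up.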

Revenue levels are usually related to the following factors:

| Factor | Explanation |
|---|---|
| GPU Model | High-end GPUs such as H100 and A100 usually generate higher revenue |
| Node Stability | Providers with higher uptime are more likely to receive orders |
| Network Bandwidth | Stronger network performance is helpful for AI inference tasks |
| Market Demand | AI growth increases demand for GPU leasing |
| Regional Location | Some regions may be more attractive to developers |
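One rough way to see how these factors combine is to treat monthly revenue as the number of GPUs times the hourly price times the hours actually leased. In the hypothetical sketch below, `utilization` stands in for market demand, node stability, and how often the Provider's bids win; the prices and utilization rates are made-up inputs, not Akash market data.

```python
HOURS_PER_MONTH = 730  # 365 days * 24 hours / 12 months


def estimated_monthly_revenue(gpus: int, price_per_gpu_hour: float, utilization: float) -> float:
    """Gross monthly revenue when only leased hours earn fees.

    `utilization` is the fraction of hours the GPUs are actually leased; it
    folds together market demand, uptime, and how often bids are accepted.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return gpus * price_per_gpu_hour * HOURS_PER_MONTH * utilization


# Hypothetical inputs: a pricier GPU leased less often vs a cheaper one leased more.
high_end = estimated_monthly_revenue(gpus=8, price_per_gpu_hour=2.0, utilization=0.5)
mid_range = estimated_monthly_revenue(gpus=8, price_per_gpu_hour=1.0, utilization=0.9)
print(round(high_end, 2), round(mid_range, 2))  # 5840.0 5256.0
```

With these made-up numbers the pricier GPU still earns more despite being leased only half the time, but the gap is far smaller than the 2x price difference suggests.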

How Are Akash Providers Different From Traditional Cloud Service Providers?

The biggest difference between Akash Providers and traditional cloud platforms lies in how resources are organized.

Traditional cloud platforms are usually built and operated by large enterprises through centralized data centers, while Akash allows different participants around the world to freely provide computing resources.

This model has several characteristics:

| Comparison Dimension | Akash Provider | Traditional Cloud Service Provider |
|---|---|---|
| Resource Ownership | Distributed | Centralized |
| Pricing Mechanism | Market bidding | Platform pricing |
| Entry Barrier | Open participation | Enterprise-grade operations |
| GPU Source | Global idle resources | Company-owned data centers |
| Resource Expansion Method | Dynamic expansion | Centralized construction |

However, traditional cloud platforms still have strong advantages in stability, enterprise support, and service systems.

What Challenges Do Akash Providers Face?

Although the Provider mechanism makes the GPU market more open, it still faces some practical challenges.

First, Providers need certain technical capabilities, including Kubernetes operations, GPU management, and network configuration.

Second, hardware quality and stability may vary across different Providers, which can also affect the developer deployment experience.

In addition, competition in the AI GPU market is intensifying quickly, with projects such as io.net, Render, and Gensyn also building in the decentralized compute market.

In the future, Provider service stability, resource scale, and the developer ecosystem may become important factors affecting Akash’s long-term competitiveness.

Summary

The Akash Network Provider mechanism integrates GPU and server resources around the world through an open marketplace, allowing developers to access AI computing power in a more flexible way.

Providers earn revenue by participating in Deployment bidding, deploying workloads, and maintaining server operations, while the blockchain is responsible for resource orders and lease settlement. This model not only improves the utilization of idle GPUs, but also supports the development of decentralized AI infrastructure.

FAQs

Who Can Become an Akash Provider?

In theory, any individual, mining farm, or data center with eligible hardware resources and technical capabilities can become a Provider.

How Do Providers Earn Revenue?

Providers earn revenue by renting GPU and server resources to developers. Fees are usually settled in AKT or stablecoins.

Why Do Providers Need Kubernetes?

Akash uses Kubernetes to manage containerized applications, so Providers need to run a Kubernetes environment to deploy developer workloads.

How Are Akash Providers Different From Traditional Cloud Platforms?

Traditional cloud platforms are operated centrally by companies, while Akash Providers use an open marketplace model that allows different participants around the world to freely provide computing resources.

Do Akash Providers Mainly Serve AI Applications?

At present, AI inference, large language model deployment, and machine learning tasks have become some of the main application scenarios for Akash Providers.
