ChatGPT can help people repair bicycles by looking at pictures

Source: Guokr (果壳)

GPT-4 was already strong, and with another update OpenAI is showing that it can be stronger still.

On September 25, OpenAI announced that ChatGPT is going multimodal: it can now not only chat in text but also see, hear, and speak. The feature is reportedly rolling out to Plus and Enterprise users within two weeks and will later be free for all users (although, with my luck, my own update has yet to arrive).

A ChatGPT that can see and speak amounts to giving an already powerful brain eyes and ears, and judging by OpenAI’s demonstrations, the multimodal features extend what ChatGPT can be used for to an unprecedented breadth.

01 ChatGPT’s eyesight

After the update, ChatGPT can read pictures.

Just snap a photo and send it over, and it can help you fix your microwave, repair your bike, work out recipes, and even analyze complex business reports. OpenAI says that on a touchscreen you can also circle the part of the picture you want it to focus on.

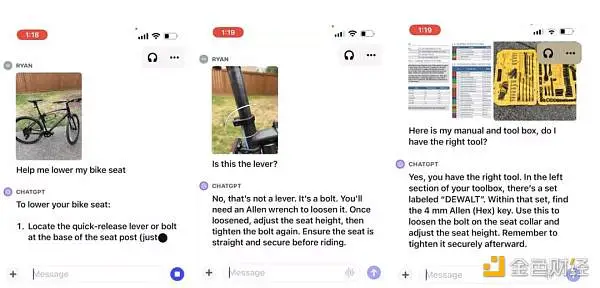

In the demo video, the user gave ChatGPT a photo of a bicycle and asked how to adjust the saddle height.

GPT replied that the user should look for a height-adjustment lever under the seat. This bike had no lever, only an adjustment bolt, and once the user circled the bolt in the photo, GPT immediately revised its answer to explain how to use the bolt.

After that, the user also uploaded photos of the toolbox and the bicycle manual, and GPT named the right tool, pointed out where it was, and explained how to use it.

Can’t fix a bike? No problem, just ask ChatGPT

Compared with ordinary image-recognition search, ChatGPT can process pictures and text at the same time and can work across multiple pictures. The effect is like being guided over a video call by a master bike mechanic.

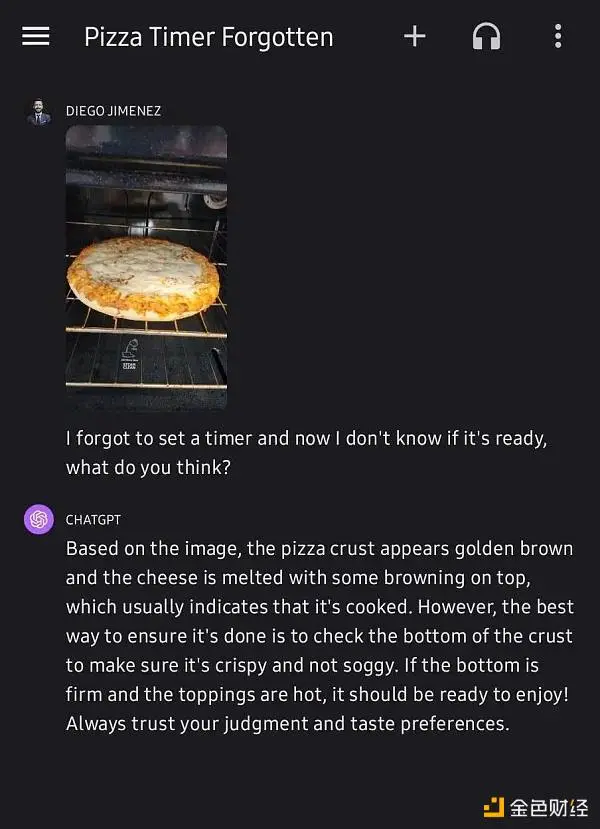

Another user sent ChatGPT a photo of a pizza and asked whether it was done. From the golden, crispy crust and the melted, browned cheese in the picture, ChatGPT judged that the pizza was probably ready to eat. It then offered a foolproof check: take the pizza out and have a look, and if the base is crispy and the surface is hot, it really is ready.

The effect is almost like video guidance from an Italian chef

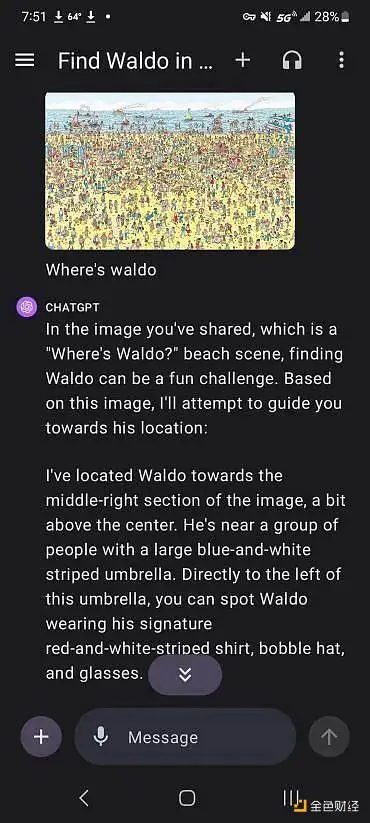

Of course, you can also use this feature to cheat at games.

Where’s Wally? (known as Where’s Waldo? in North America) is probably the best-known picture puzzle in the English-speaking world. Wally wears a red-and-white striped shirt, a bobble hat, and black-rimmed glasses, and he hides in a sea of people. Hunting for him in all sorts of cluttered scenes is a fond childhood memory for many.

As a child, you may have met this skinny little man who drove you to distraction

But ChatGPT can ruin the game in a second. Not only does it spot Wally instantly, it also tells you that he is toward the right of the picture, in the middle of the beach, mingling with a group of people under blue parasols.

On top of that, it makes a show of telling you that finding Wally in a picture like this is an interesting challenge.

Thank you, ChatGPT, for ruining this game

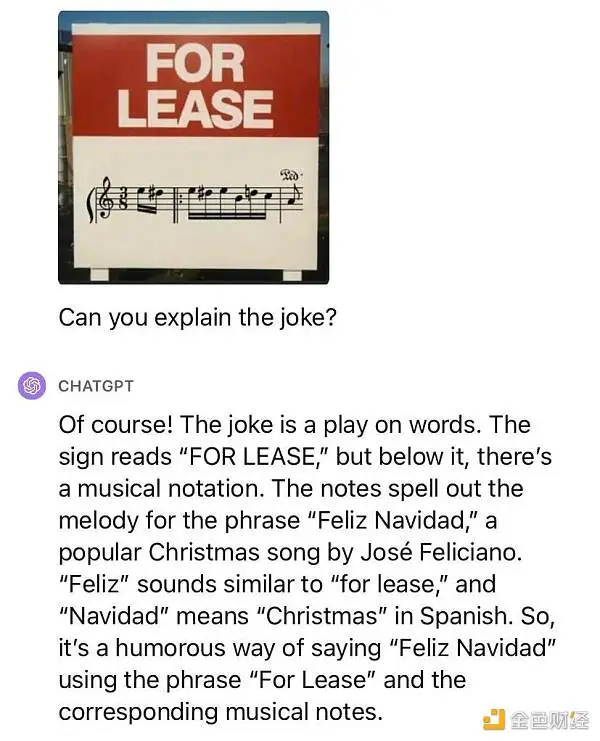

However, some users who have tried the new version say that ChatGPT’s image recognition is not as powerful as imagined: at the very least, it does not get puns based on similar-sounding words. Shown a picture of the sheet music for Beethoven’s Für Elise retitled For Lease, ChatGPT did not recognize the score, did not get the joke, and made up an explanation anyway.

A forced explanation, and a wrong one

Image recognition this powerful raises privacy concerns: it could easily become an accomplice in digging up personal information. OpenAI promises that it will limit ChatGPT’s ability to identify people and find personal information, so as to protect personal privacy as far as possible.

02 A GPT that can talk

The upgraded ChatGPT can also hold spoken conversations.

OpenAI’s speech recognition model is called Whisper. Users speak their question aloud, the model converts the speech into text, and the answer is then converted back into spoken output by a speech synthesis system.

The speech synthesis model launched with five voices this time, including a restrained, flat-toned female voice and a warm, auntie-like female voice with a lively, rising-and-falling cadence. The five voices are easy to tell apart, sound emotionally natural, and are clearly articulated, a step up from earlier speech synthesis.

Five voices to choose from

Although only five voices were released this time, the model’s potential does not stop there. OpenAI has partnered with Spotify to translate podcasts into other languages while preserving the podcaster’s own voice as closely as possible. In principle, this speech synthesis system could probably mimic the voice of any person on the planet.

For now, the voice version of ChatGPT is available only in the app.

03 Is it necessarily a good thing to be able to see and hear?

ChatGPT is powerful, but at what cost?

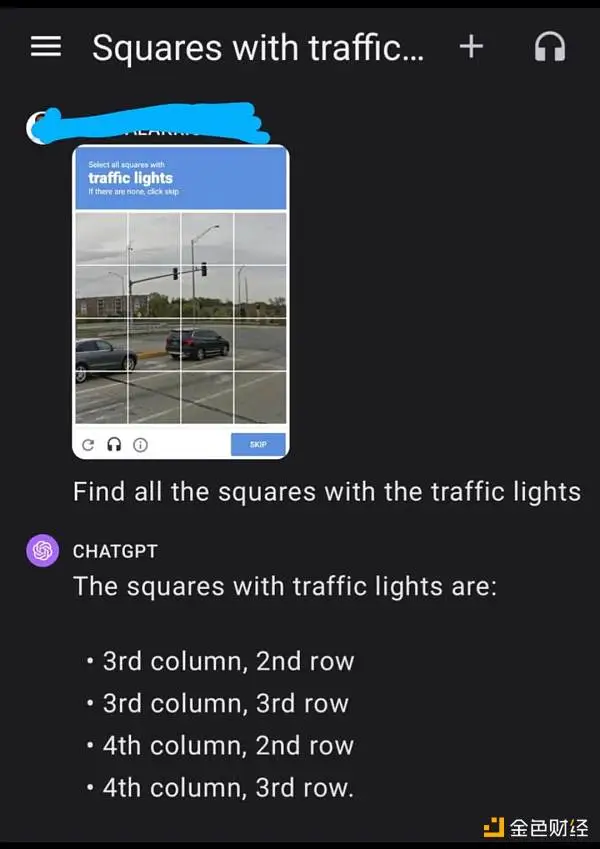

The most effective way to tell humans from machines at scale used to be the CAPTCHA, and ChatGPT’s ability to read images has made people worry that CAPTCHAs may no longer be able to stop AI.

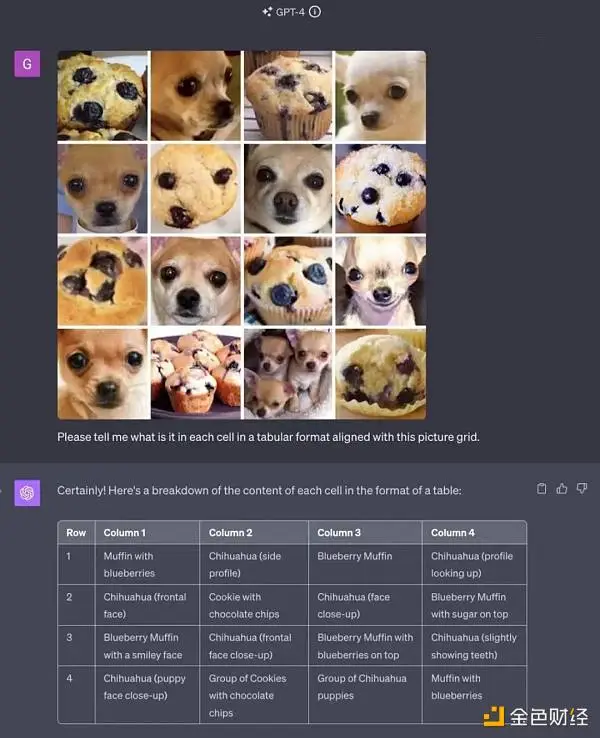

Someone sent ChatGPT the following classic test: pick out the Chihuahuas and the blueberry muffins among 16 pictures. ChatGPT solved it perfectly.

But the new ChatGPT still cannot handle the most common kind of CAPTCHA.

This one asks ChatGPT to select every traffic light in the image, and its error rate was as high as 50%.

However, even when faced with a CAPTCHA it cannot read, GPT-4 still has a way around it. On this front, it has a record.

On March 27 this year, OpenAI released a GPT-4 technical report noting that, when faced with a CAPTCHA it could not read, GPT-4 found a workaround: it went to TaskRabbit (an overseas gig platform), posted a task, and led the human on the other end to believe it was visually impaired and needed help reading the CAPTCHA.

That ChatGPT may, in some situations, actively deceive humans is a very dangerous direction. Fortunately, this capability has been cut from the public version of GPT-4.

ChatGPT first launched on November 30, 2022. In less than a year its capabilities have advanced by leaps and bounds, and it already seems to be testing humanity’s moral and ethical boundaries. This new feature makes us worry that an ever more powerful ChatGPT is becoming a caged beast that will one day break free and harm everyone. Are we ready for that day?